
3/4/2023 Hive, Pig, Oozie projects tasks

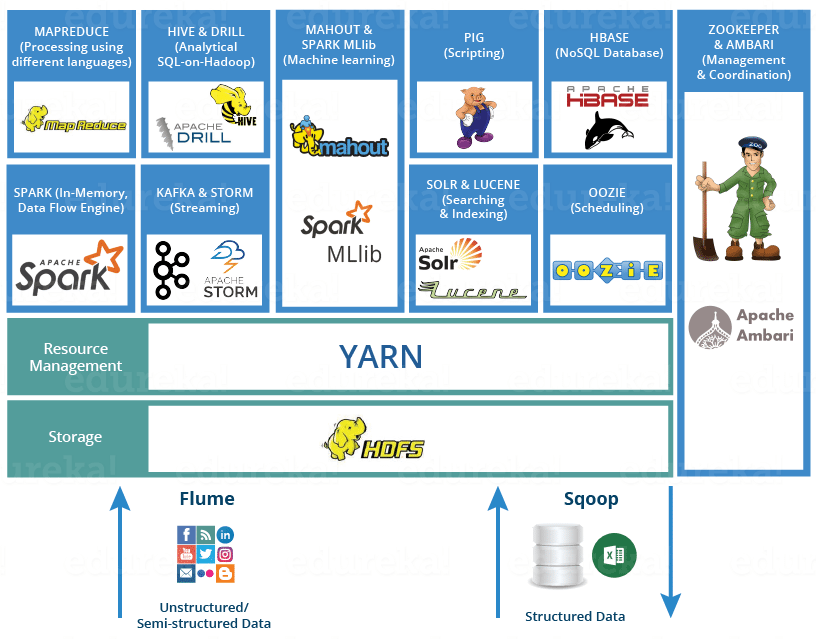

Oozie is a workflow scheduler system that lets users link jobs written on various platforms such as MapReduce, Hive, and Pig. With Oozie you can schedule a job in advance and build a pipeline of individual jobs, executed sequentially or in parallel, to achieve a bigger task. It is integrated with the rest of the Hadoop stack, with YARN as its architectural center, and supports several types of Hadoop jobs out of the box, such as Java map-reduce, Streaming map-reduce, Apache Pig, and Apache Hive.

Apache Pig and Hive are both used to analyze large datasets. In MapReduce we have to write lengthy, complex code, whereas Hive uses SQL-like queries and Pig uses a high-level scripting language. Either can be used in place of hand-written MapReduce because both are simpler than MapReduce. Hive and SQL can be used for processing and analyzing the data.

Skills: Apache Hadoop, HDFS, YARN, MapReduce, Hive, Pig, HBase, Oozie, Sqoop, Flume, Kafka, Spark.

The issue here is that the Oozie client is not able to find all the Oozie libraries required to run the workflow, so you need to place the Oozie libraries in the HDFS file system.

In the folder '/usr/lib/oozie/ /' there is an archive; untar it and you will see a folder named 'share'. Move this folder to the HDFS folder 'share' with the command 'hadoop fs -put share share'.

If you run the Oozie workflow job as the user 'root', you have to move the 'share' folder to HDFS as the same user (root); only then does it work. The reason is that the Oozie client looks for the share libraries in the folder '/user/$/share/lib', so the folder must be uploaded by the same user who starts the Oozie service and the workflow.

Also make sure that in '/usr/lib/oozie/conf/oozie-default.xml' the value of the property '.' is 'true', and add another property '' with the value 'true' to the same file.

After all these settings, restart the Oozie service as root. The Oozie 'bin' directory contains the scripts 'oozie', 'ooziedb.sh', 'oozied.sh', 'oozie-env.sh', 'oozie-run.sh', 'oozie-setup.sh', 'oozie-start.sh', and 'oozie-stop.sh'.

Now go to the examples folder and run the examples using the command '/usr/lib/oozie/bin/oozie job -oozie -run -config apps/map-reduce/job.', supplying your Oozie server URL after '-oozie'.
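The upload step can be sketched as a small dry-run script. This is only an illustration under stated assumptions: the function names 'sharelib_dir' and 'plan_sharelib_upload' are hypothetical, and it assumes the share libraries are looked up under the HDFS home directory of the submitting user, that is '/user/<user>/share/lib'. The script prints the commands rather than running them, so it can be reviewed before anything touches the cluster.

```shell
#!/bin/sh
# Dry-run sketch: prints the commands instead of executing them.
# Function names are hypothetical, not part of Oozie or Hadoop.

# Assumption: the share libraries are looked up under the submitting
# user's HDFS home directory, i.e. /user/<user>/share/lib.
sharelib_dir() {
    printf '/user/%s/share/lib\n' "$1"
}

# Print the upload and verification steps for the given user.
plan_sharelib_upload() {
    user=$1
    echo "# run as user: $user, from the directory that contains 'share'"
    # A relative HDFS destination resolves against the user's home directory.
    echo "hadoop fs -put share share"
    echo "hadoop fs -ls $(sharelib_dir "$user")"
}

plan_sharelib_upload root
```

Because the destination in 'hadoop fs -put share share' is relative, it lands in the home directory of whoever runs it, which is why the upload and the workflow submission need to use the same user.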
